Internship Microsoft Research

Researcher Intern - Human Experience Design
Interaction Design research intern

Project Tokyo

Microsoft Research, Cambridge, United Kingdom

Duration

6 months

Seen in:

Blog post on Microsoft news

Inclusive design, Assistive technology, Ethical AI, Social impact, Design for learning

The content on this page is confirmed to be non-confidential and is based on the Project Tokyo project page and the blog article about the project. Pictures by Jonathan Banks.

MICROSOFT RESEARCH INTERNSHIP

During my internship at Microsoft Research I joined the Project Tokyo team.

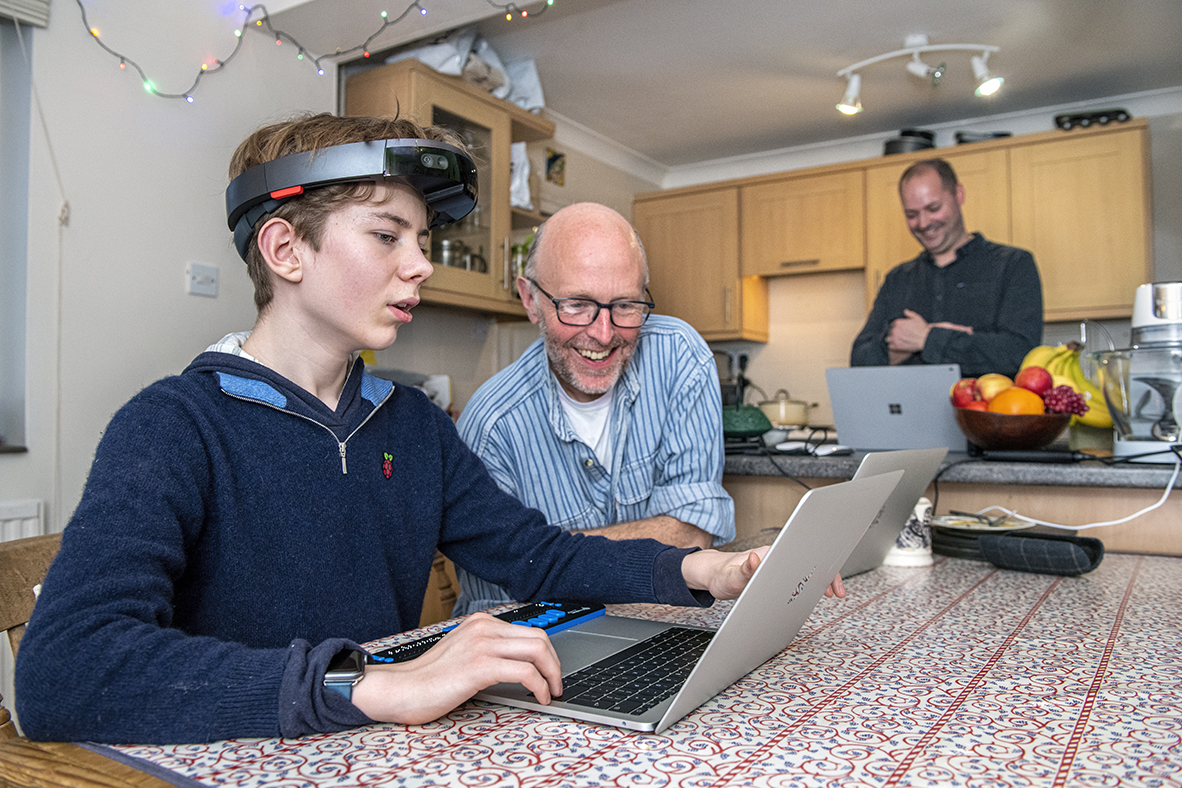

In Project Tokyo we researched how agent technologies can augment people’s own abilities by amplifying their existing skills. During my internship we worked closely with children who are blind, since the technology could help them understand social behaviours and contexts through embodied interaction.

This matters because body language and an understanding of social contexts support blind children’s social development.

"Geert has been with us for 6 months, focussed initially on AI and Ethics. This has been a tough area for us to explore in the past because of the complexities around the ethical questions raised by the technology we are working with. Geert has avoided being bogged down by this issue, and instead has opened up a whole new space around the reciprocity of AI systems that is a really rich area of research for us, and likely of interest to product partners such as HoloLens, too. He explored this space through the creation of a rich set of prototypes that are both physical and digital, and at lots of different levels of implementation, from tangible, non-working prototypes, to working systems that are plugged into our Project system." - Richard Banks, Principal Design Manager, Microsoft Research Cambridge

Who's there?

The system calls out the name of the person in front of the wearer. This helps the wearer understand who is around them, and it also shows them that it is important to use your body while talking to people, which encourages a more open posture. That posture in turn lets others know that the wearer is interested and ‘paying attention’ to them. The wearer learns this in action, which is a different way of understanding it than being told by adults.

Am I being seen?

A white light tracks the person closest to the user and turns green when the person has been identified to the user. The feature lets communication partners or bystanders know they’ve been seen, making it more natural to initiate a conversation. The LED strip also gives people an opportunity to move out of the device’s field of view and not be seen, if they so choose. “When you know you are about to be seen, you can also decide not to be seen.”

Reciprocal interaction

My role was to work on dynamic consent: how do we deal with computer vision technology when we want some people to be seen by the system while others might be unknown or anonymous? This led to the first and most important question: how do you know that you have been seen and that the wearer of the device has been notified? For this I prototyped experiences that could be built on top of the HoloLens experience.

Research workshops

To understand how the prototypes would function in social scenarios, we designed different games to trigger these interactions. In one, the wearer led the conversation with their gaze: someone could talk only while the wearer was looking at them. This led to interesting situations and helped us better understand how people deal with the system’s uncertainty.

Hardware/research support

Besides the project on reciprocal interaction, I helped with the research (making sense of our research sessions by analysing and summarising the video), worked on hardware (modifying HoloLens devices) and created communication material, for example for parental consent and for presenting the project and research internally.